Modulatory pathways are all you need

You’ve probably heard the term attention. In fact, you’ve probably heard it a lot. Since the advent of transformers, it’s pretty much all people talk about. But where did attention come from? What is it really? Whilst “modulatory pathways” is not a common answer to either of these questions, I think it’s one that gets to the heart of things.

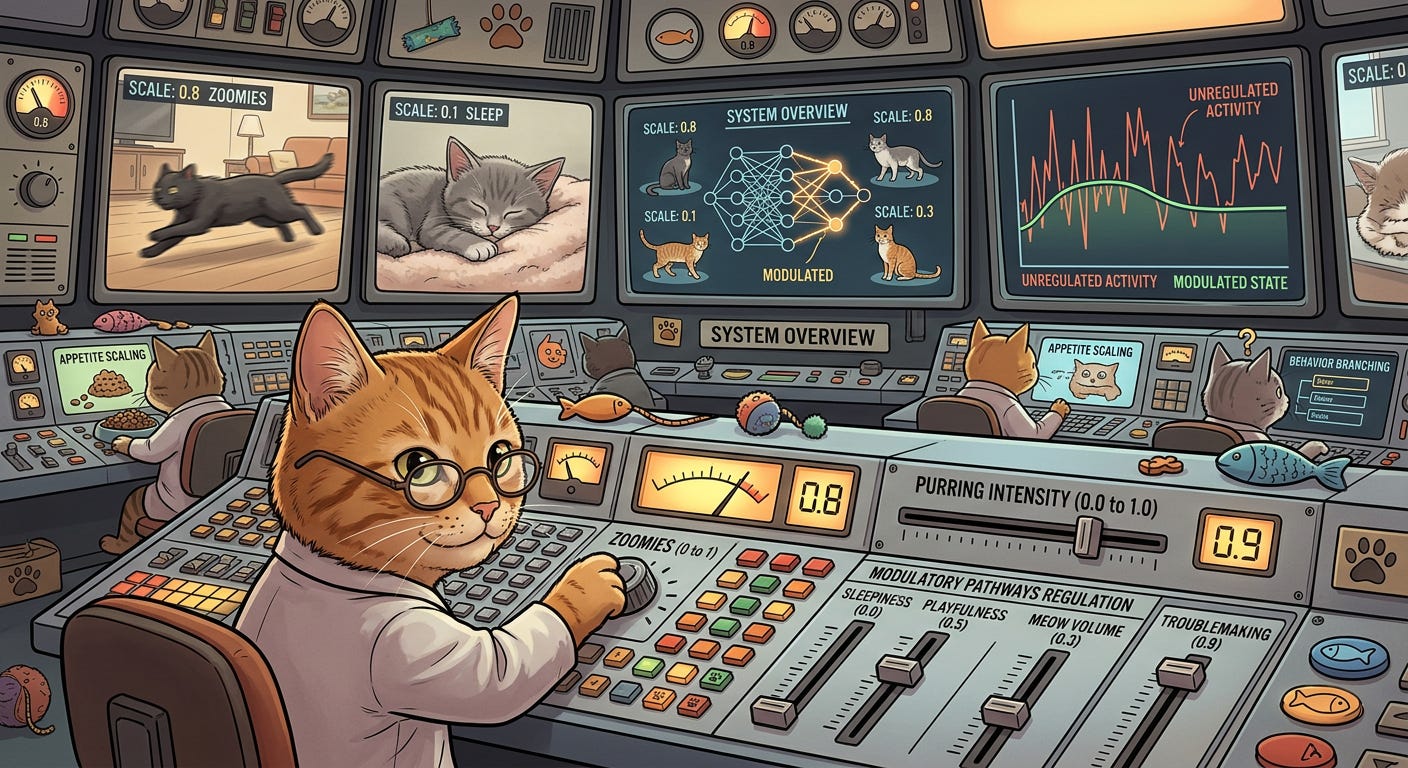

A modulatory pathway is a fragment of a neural network that modulates the behaviour of the broader network that it’s part of. These pathways are usually pretty small compared to what they’re regulating, and they usually modulate the behaviour of the network’s components through multiplicative scaling, which just means the outputs of a component are multiplied by a number produced by the modulatory pathway. When the scale is zero, the component is effectively turned off; when it’s one, it’s fully active. And importantly, the modulation is dynamic: it’s recomputed for each input, enabling the network to reconfigure itself in a context‑sensitive manner.
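To make the multiplicative scaling concrete, here is a minimal sketch in plain Python. Everything in it is illustrative: the `component` weights, the function names, and the use of a sigmoid to keep the scale between zero and one are my assumptions, not a description of any particular architecture.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dot(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))

def component(x):
    """The part being regulated: a fixed linear unit (hypothetical weights)."""
    return dot([0.5, -1.0, 2.0], x)

def modulatory_pathway(x, w_mod, b_mod):
    """A tiny pathway that reads the same input and emits a scale in (0, 1)."""
    return sigmoid(dot(w_mod, x) + b_mod)

def modulated_output(x, w_mod, b_mod):
    scale = modulatory_pathway(x, w_mod, b_mod)  # recomputed for every input
    return scale * component(x)

# Hypothetical modulatory weights that switch on when the first feature is positive.
w_mod, b_mod = [10.0, 0.0, 0.0], 0.0
on = modulated_output([5.0, 0.0, 1.0], w_mod, b_mod)    # scale close to one
off = modulated_output([-5.0, 0.0, 1.0], w_mod, b_mod)  # scale close to zero
```

In a real network the modulatory weights would be learned along with everything else; here they are hand-picked so the on/off behaviour is easy to see.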

The idea of using neural pathways to achieve dynamic modulation has roots in the 1980s, though it didn’t really reach the mainstream until the introduction of LSTM gating in the 1990s. LSTMs use multiplicative gates — which are essentially a handful of neurons — to control the flow of information through a recurrent neural network (RNN), allowing certain components of the information flow to be preserved or ignored depending on context. These gates are trained jointly with the rest of the model, and this is a property that also characterises modern attention mechanisms.
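As a rough illustration of gating, the sketch below shows one update of a heavily simplified, scalar memory cell in the style of an LSTM. It is not a full LSTM: there is no output gate or hidden state, the gates look only at the current input, and the parameter names (`wf`, `bf` and so on) are made up for this example.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def gated_cell_step(c_prev, x, params):
    """One step of a simplified scalar memory cell with two multiplicative gates."""
    forget = sigmoid(params["wf"] * x + params["bf"])  # how much old memory to keep
    write = sigmoid(params["wi"] * x + params["bi"])   # how much new input to let in
    candidate = math.tanh(params["wc"] * x + params["bc"])
    return forget * c_prev + write * candidate

# Hand-picked (hypothetical) parameters: one setting preserves memory, one overwrites it.
keep = {"wf": 0.0, "bf": 20.0, "wi": 0.0, "bi": -20.0, "wc": 1.0, "bc": 0.0}
overwrite = {"wf": 0.0, "bf": -20.0, "wi": 0.0, "bi": 20.0, "wc": 1.0, "bc": 0.0}

kept = gated_cell_step(0.7, 1.0, keep)           # stays close to 0.7
replaced = gated_cell_step(0.7, 1.0, overwrite)  # close to tanh(1.0)
```

In a trained LSTM the gate parameters are learned, and the gates also depend on the previous hidden state, so whether memory is kept or overwritten varies with context.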

Like gating, early forms of attention were built on top of RNNs. But their purpose was different: they addressed the puzzle of how to give a neural network access to as much information as possible without overwhelming it. This originally came out of machine translation. Prior to attention, RNN-based translation systems worked by encoding text into a fixed-width latent vector and then decoding that vector into text in another language. This sort of worked, but didn’t scale well, since the amount of information transferred from encoder to decoder was limited by the size of the latent vector.

The “give a neural network access to as much information as possible” part of this puzzle was solved by creating additional connections from every encoder hidden state (one per input token) to the decoder. The “without overwhelming it” part then involved introducing modulatory pathways that dynamically selected and weighted these additional information sources, rather than passing them all through equally. This dynamic weighting mechanism became known as attention[1].
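The selecting and weighting step can be sketched as follows. This is a minimal, plain-Python take on additive attention in the general style of the early translation work: each encoder hidden state is scored against the current decoder state, the scores are turned into weights with a softmax, and the weighted sum becomes the context passed to the decoder. The function names, matrix shapes and toy values are assumptions for illustration only.

```python
import math

def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def additive_attention(decoder_state, encoder_states, w_dec, w_enc, v):
    """Return (weights, context) for a single decoder step."""
    projected_dec = matvec(w_dec, decoder_state)
    scores = []
    for h in encoder_states:
        projected_enc = matvec(w_enc, h)
        hidden = [math.tanh(d + e) for d, e in zip(projected_dec, projected_enc)]
        scores.append(sum(vi * hi for vi, hi in zip(v, hidden)))
    weights = softmax(scores)  # one dynamic scaling factor per input token
    dim = len(encoder_states[0])
    context = [sum(w * h[k] for w, h in zip(weights, encoder_states))
               for k in range(dim)]
    return weights, context

# Toy example: three encoder states, identity projections (hypothetical values).
identity = [[1.0, 0.0], [0.0, 1.0]]
encoder_states = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
weights, context = additive_attention([1.0, 0.0], encoder_states,
                                      identity, identity, [1.0, 0.0])
```

The weights always sum to one, so the decoder receives a blend of the encoder states in which the ones judged most relevant dominate.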

Since then, attention has infiltrated almost all neural architectures. Convolutional neural networks, for example, can also use attention to select and weight information. This usually involves adding small attention blocks[2] (in effect, modulatory pathways) after convolutional layers. These blocks use trainable weights to learn which of the intermediate representations produced by the convolutional filters matter most for the downstream task. In image classification, attention might, for example, dampen the influence of filters that respond to background textures while amplifying those that capture features of the object being classified.
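For a flavour of what such a block does, here is a simplified channel-attention sketch in plain Python. It pools each filter's output to a single number, passes the pooled values through one small linear layer, and uses the resulting sigmoid gates to rescale each channel. Real attention blocks are more elaborate (they typically use a small two-layer network, and some add spatial attention too), so treat the single-layer design and all names here as assumptions.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def channel_attention(feature_maps, weights, biases):
    """Rescale each channel (a 2D list) by a gate computed from all channels."""
    # Squeeze: global average pool, one number per channel.
    pooled = [sum(sum(row) for row in fmap) / (len(fmap) * len(fmap[0]))
              for fmap in feature_maps]
    # Gate: one small linear layer plus sigmoid, one gate per channel.
    gates = [sigmoid(sum(w * p for w, p in zip(w_row, pooled)) + b)
             for w_row, b in zip(weights, biases)]
    # Modulate: multiply every value in a channel by that channel's gate.
    scaled = [[[g * v for v in row] for row in fmap]
              for g, fmap in zip(gates, feature_maps)]
    return gates, scaled

# Two 2x2 channels; hypothetical weights that amplify the first and dampen the second.
feature_maps = [[[1.0, 1.0], [1.0, 1.0]], [[2.0, 2.0], [2.0, 2.0]]]
gates, scaled = channel_attention(feature_maps,
                                  [[0.0, 0.0], [0.0, 0.0]], [20.0, -20.0])
```

With learned weights, the gates would depend on the pooled activations, so a filter could be amplified for one image and dampened for another.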

Transformers take this much further. Their modulatory pathways are no longer a small component of the network. They’re almost the whole thing, hence the title of the paper that introduced them, “Attention Is All You Need”. A transformer is built from stacks of attention layers that continually modulate information flow. Without getting into details (see Deep Dips #3: Transformers for a primer), this involves using trainable weights to compute relevance scores between every pair of positions in the input, which basically amounts to lots of small modulatory pathways. These scores act as dynamic scaling factors that determine how strongly different pieces of information influence one another, allowing the network to reconfigure itself as it runs.
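The pairwise relevance scores can be sketched with a single-head, scaled dot-product self-attention layer. This is a bare-bones plain-Python version with no masking, no multiple heads and no output projection; the function names and toy weight matrices are assumptions for illustration.

```python
import math

def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(row):
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a sequence x."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]
    # One relevance score for every pair of positions.
    scores = [[s / math.sqrt(d_k) for s in row] for row in matmul(q, k_t)]
    weights = [softmax(row) for row in scores]  # dynamic scaling factors
    return weights, matmul(weights, v)

# Three positions, two features, identity projections (hypothetical values).
identity = [[1.0, 0.0], [0.0, 1.0]]
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, output = self_attention(x, identity, identity, identity)
```

Each row of `weights` says how strongly one position draws on every other position, and those numbers are recomputed from scratch for every input.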

Other modulatory pathways can be found in mixture-of-experts (MoE) transformers, which are widely used in modern LLMs. These models contain multiple parallel neural networks — the experts — and a gating mechanism that dynamically selects which experts should be active for a given input. Experts irrelevant to the current context are turned off, saving computation; relevant ones are activated. As before, this modulation is done by small trainable pathways that output multiplicative scaling factors.
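Here is a minimal sketch of that gating, assuming top-k routing with a softmax over the selected experts. This is one common scheme, but it is my assumption rather than something spelled out above, and the router weights and toy experts are invented for the example.

```python
import math

def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, router_weights, experts, top_k=1):
    """Route x to the top_k experts and mix their outputs by gate value."""
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:top_k]
    gates = softmax([logits[i] for i in chosen])  # multiplicative scaling factors
    output = None
    for gate, idx in zip(gates, chosen):
        y = experts[idx](x)  # experts that were not chosen are never evaluated
        scaled = [gate * v for v in y]
        output = scaled if output is None else [a + b for a, b in zip(output, scaled)]
    return chosen, output

# Two toy experts and a router keyed on the sign of the first feature (hypothetical).
experts = [lambda x: [2 * v for v in x], lambda x: [-v for v in x]]
router_weights = [[1.0, 0.0], [-1.0, 0.0]]
chosen_a, out_a = moe_forward([3.0, 1.0], router_weights, experts)
chosen_b, out_b = moe_forward([-3.0, 1.0], router_weights, experts)
```

The computational saving comes from the loop only ever calling the selected experts; in a real model each expert is a sizeable network, so skipping most of them matters.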

So, within artificial neural networks, modulatory pathways have become a useful mechanism for solving a range of practical problems. And although these ideas were not primarily motivated by biology, modulatory pathways also play a central role in the brain. Neuromodulators such as dopamine and serotonin are released as a result of neural activity. They then diffuse in the brain and cause functional responses elsewhere, operating as large‑scale modulatory pathways that tune the brain’s own computation in a context‑dependent way. There were some promising early neural networks, such as GasNets, that explicitly explored this mechanism.

Modulation is also a fundamental organising principle in biology beyond the nervous system. Cells, tissues, and even whole organisms rely on modulatory signals to flexibly reconfigure their behaviour without altering their underlying structure. Many years back, I led a project developing neural‑network‑like architectures inspired by modulatory processes within cells[3]. One of the interesting things we discovered was that modulatory pathways could significantly reduce network size by allowing components to dynamically take on different roles depending on context, meaning that very compact systems could do complex things. Not a bad lesson to learn in the context of modern-day behemoth AI systems.

So, whilst the conventional AI wisdom these days is that attention is all you need, I prefer to take a broader view: modulatory pathways are all you need[4].

[1] This idea was first described in this 2014 paper by Bahdanau, Cho, and Bengio.

[2] Convolutional Block Attention Module (CBAM) is the most common variety.

[3] We called these artificial biochemical networks. They never really took off, but if you want to know more, take a look at this overview paper.

[4] Though not really. Even transformers still contain plenty of other stuff, such as MLP layers. The name of the paper was really getting at the fact that you don’t need recurrence to produce effective models, since recurrence was the dominant approach in machine translation at the time. Although it turns out that recurrence is still important if you want to process really long contexts: state space models like Mamba basically re-introduce recurrence as a way of handling long context.